
Capital One - Supplier Risk Dashboard

Unified dashboard ends error-prone tool-hopping and gives users a clear starting point.

Role:

Duration:

Tools:

Impact:

Problem:

Supplier teams bounced between portals, emails, and spreadsheets, wasting time and missing urgent risks.

Solution:

One dashboard centralizes the most crucial data and risk signals at login and guides each user's next action.


NOTE

Visuals are simplified recreations designed to respect NDA boundaries while accurately reflecting design decisions and outcomes.

Problem

Even after the launch of a unified supplier data platform, usage data and feedback showed gaps in adoption and impact. Users fell back on workarounds that ate up time and left room for human error.

Two user patterns stood out:

Didn't see the value

Some users didn’t believe the platform reduced effort — so they stuck with old habits, like pulling reports manually or checking systems individually.

Didn't know where to start

Others arrived but felt disoriented. The landing page displayed only pinned suppliers and pending activities — with no guidance on what mattered or how to extract meaningful value.

Goal

- Increase adoption so efficiencies and data accuracy pay off.

- Lay out a clear path to the right data—no hunting.

- Turn data consolidation into visible efficiency, not a lateral move.

Research

We started by assessing the limitations of the platform’s existing entry experience to find opportunities for the users who didn't know what to do when they arrived.


Original landing page showing pinned suppliers and pending activities, requiring prior system knowledge to navigate effectively.

In exploring how we might address that group's needs, we surfaced a broader opportunity: a redesigned landing view could support both groups. It could orient users who felt lost, and it could make the system faster, clearer, and more actionable for those who didn't perceive any efficiency gains.

That pivot shifted the focus from clarity alone to efficiency and prioritization. A dashboard could offer a clear starting point for disoriented users and tangible time savings for users who didn't see the benefit of using the platform — surfacing what mattered most, right away.

Done well, that kind of entry experience could exceed the platform’s original goal: not just improving visibility, but helping users spot issues faster, reduce manual effort, and make smarter supplier decisions at scale.

To guide that redesign, we focused on the supplier management department — the platform’s core user group. We chose to start with Accountable Executives (AEs), who sat in the middle of the hierarchy: senior enough to need a macro view, but close enough to day-to-day issues to feel the friction firsthand. A dashboard built for them could scale both upward and downward — informing leaders while supporting the people handling approvals and supplier comms.

User Interviews

I conducted nine 1:1 interviews with Accountable Executives (AEs) in the supplier management group to understand:

- What kinds of data they relied on most

- Where friction or blind spots slowed them down

- What improvements would make their daily workflows faster and more reliable

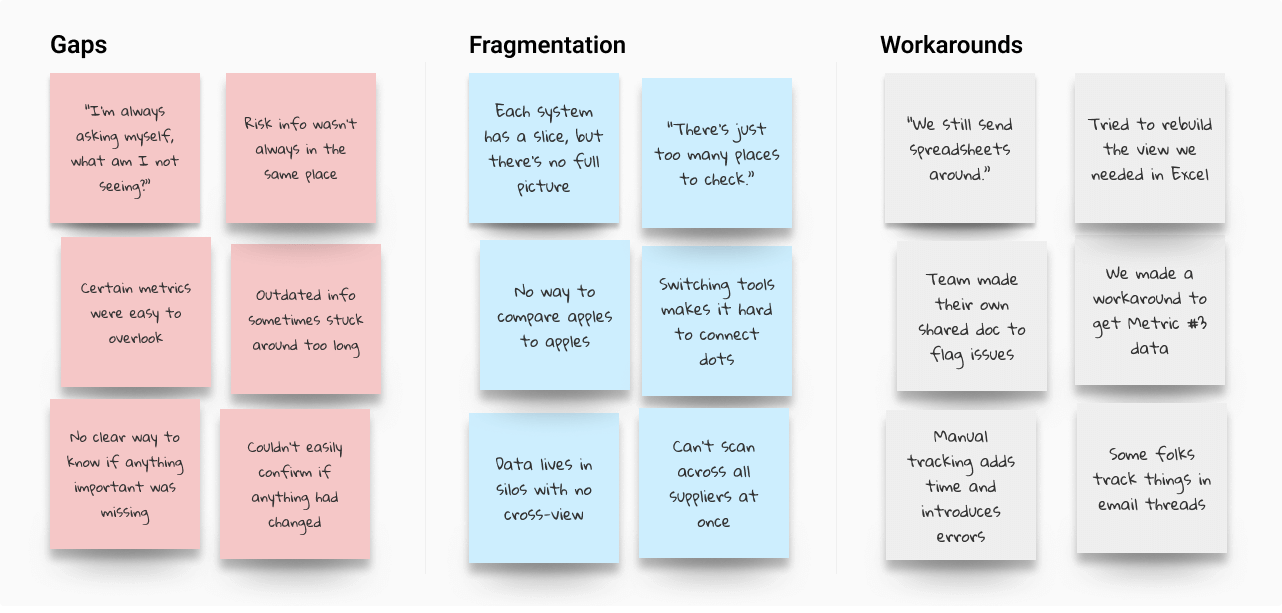

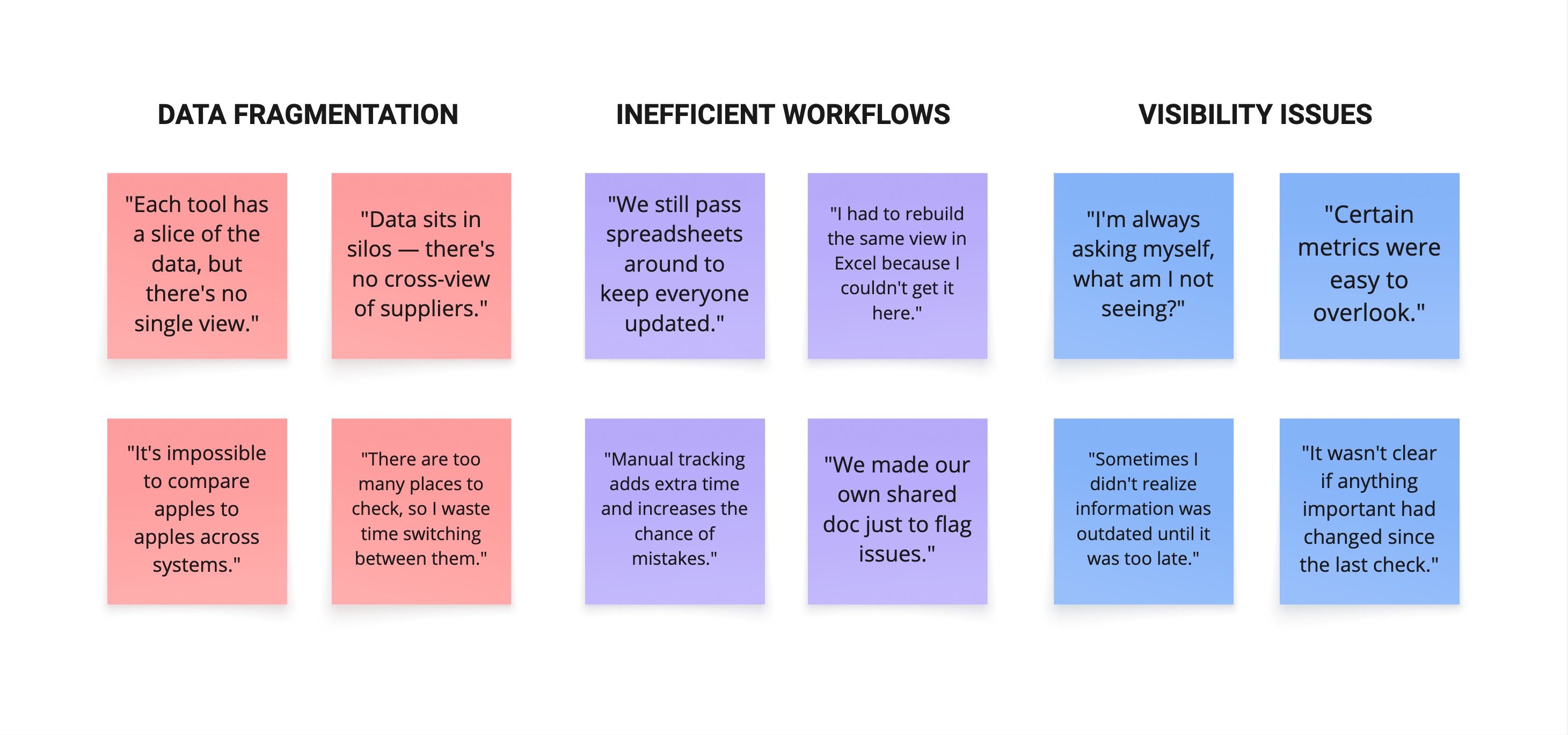

To synthesize what I heard, I created an affinity map to identify major patterns across participants. I grouped the themes into three clusters—gaps in visibility, fragmented data, and manual workarounds—all pointing to the same broader problem.

Grouped interview insights into three categories: gaps, fragmentation, and workarounds, each showing how risk was amplified across the workflow.

While those clusters captured the shape of the problem, risk — whether general or tied to specific metrics — dominated across all of them. It came up far more often than any other theme.


Nothing else came close. Risk was the dominant theme.

"What fires do I need to put out?"

That anxiety around risk shaped how AEs approached nearly every aspect of their work — from where they looked for answers to how they made decisions. The following insights illustrate how that pressure showed up in practice:

Gaps in Visibility

With supplier data spread across tools, users worried something important might fall through the cracks.


Fragmented Data

It was hard to spot trends or systemic issues when each supplier and metric lived in a separate tool, view, or workflow.

Manual Workarounds

Some tracked data manually using spreadsheets or email threads — adding friction and undermining confidence in the data itself.

AEs also consistently prioritized eight key risk metrics for assessing individual suppliers, with four of those metrics also providing critical portfolio-wide insight. While Portfolio Health and Supplier Risk drove the core design focus, Contract Approvals emerged as a distinct “quick win” — not because of supplier performance, but because approval delays, often buried in emails, created downstream risk and were relatively easy to surface with the right dashboard hooks.

Because risk showed up across every theme — not just in metrics, but in the stress of the workflows themselves — we knew the dashboard had to surface that risk clearly and make it actionable. That meant prioritizing visibility at both the portfolio and individual levels, so users could catch issues early, act with confidence, and reduce the chance of things slipping through.

These findings shaped the dashboard’s structure from the start — turning risk clarity, not just data access, into the foundation of the design.

Wireframes

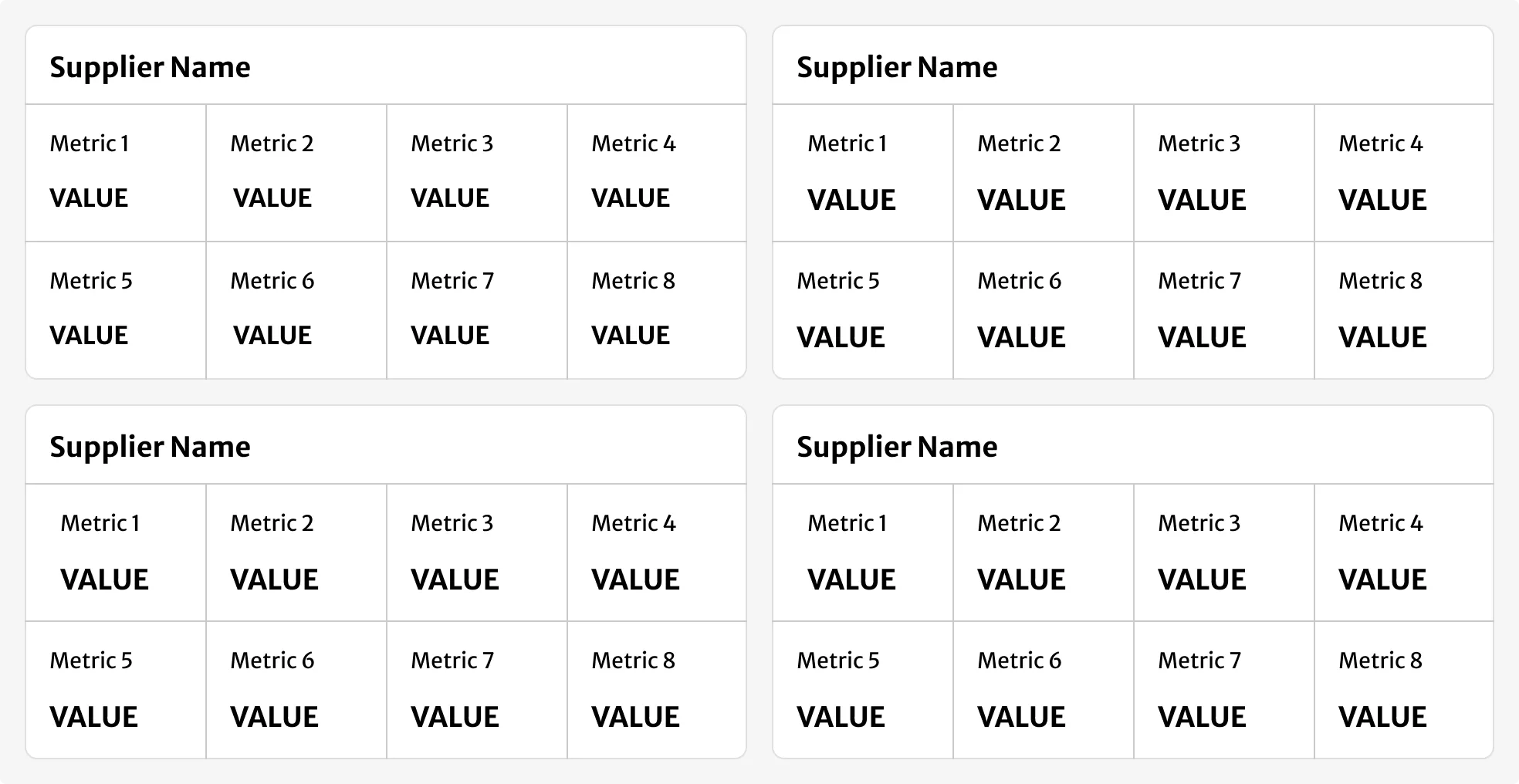

To evaluate layout directions, I started with a scorecard-style layout for individual supplier data, based on early stakeholder ideas and internal Capital One components.

The format was easy to read but didn’t scale for the volume and density our users needed.

Card-style layout based on early stakeholder direction. Built using Capital One’s internal components. Clear at small scale, but hard to scan and inefficient at volume.

I moved toward a table format to support faster scanning, comparison, and interaction across large datasets.


Early table structure. Better for comparison and navigation, but lacked cues and controls for prioritization.

Design

Before and After

The original interface showed only pinned suppliers and pending tasks — offering limited guidance on what to do first and no easy way to dive deeper. The new layout surfaced key risk signals and supplier priorities upfront, combining macro trends and individual supplier data in a single, scannable view. This saved time, reduced the risk of missing something important, and helped prevent human error.


Before: the landing page showed only pinned suppliers and pending tasks, offering little direction and no way to see broader risk patterns.


After: the dashboard surfaces key risk signals and supplier priorities in a single table view, enabling faster triage and deeper insights without switching pages.

Layout Overview

I structured the dashboard around a dense “megatable” layout that displayed supplier-level metrics in a single, scrollable view. Each row showed key risk signals, interaction hooks, and priority flags for a given supplier, allowing users to assess, compare, and act without navigating between pages. This layout supported high-volume scanning and became the foundation for all subsequent interaction and design decisions.


Key risk signals, supplier-specific data, and action triggers in a single, high-density interface — enabling faster triage, comparison, and confident next steps without switching views.

Summary Graphs

To support pattern recognition at scale, I added four graphs above the table showing portfolio-wide risk trends using the four key metrics identified in the interviews. These gave users a quick read on system health and made it easier to spot concerning shifts without manually inspecting rows. Charts were created and implemented using enterprise-standard third-party software. For clarity in this case study, axis labels are omitted — the actual product included interactive hover states.


High-level visuals made it easier to scan for system-wide red flags — before diving into individual suppliers.

Interaction Elements

Because a dense table can quickly feel overwhelming, I added a few small touches to keep things clear and actionable. These details made it easier for users to stay oriented and work faster.


Key Interaction Enhancements

1. Supplier Count Confirmation

Displayed the total number of monitored suppliers so users could immediately tell if any were missing.

2. Approvals Shortcut

Before, users had no easy way to see how many approvals were pending and had to go to another system to act. I added a persistent button with a counter, so they could jump straight to the right spot with one click.

3

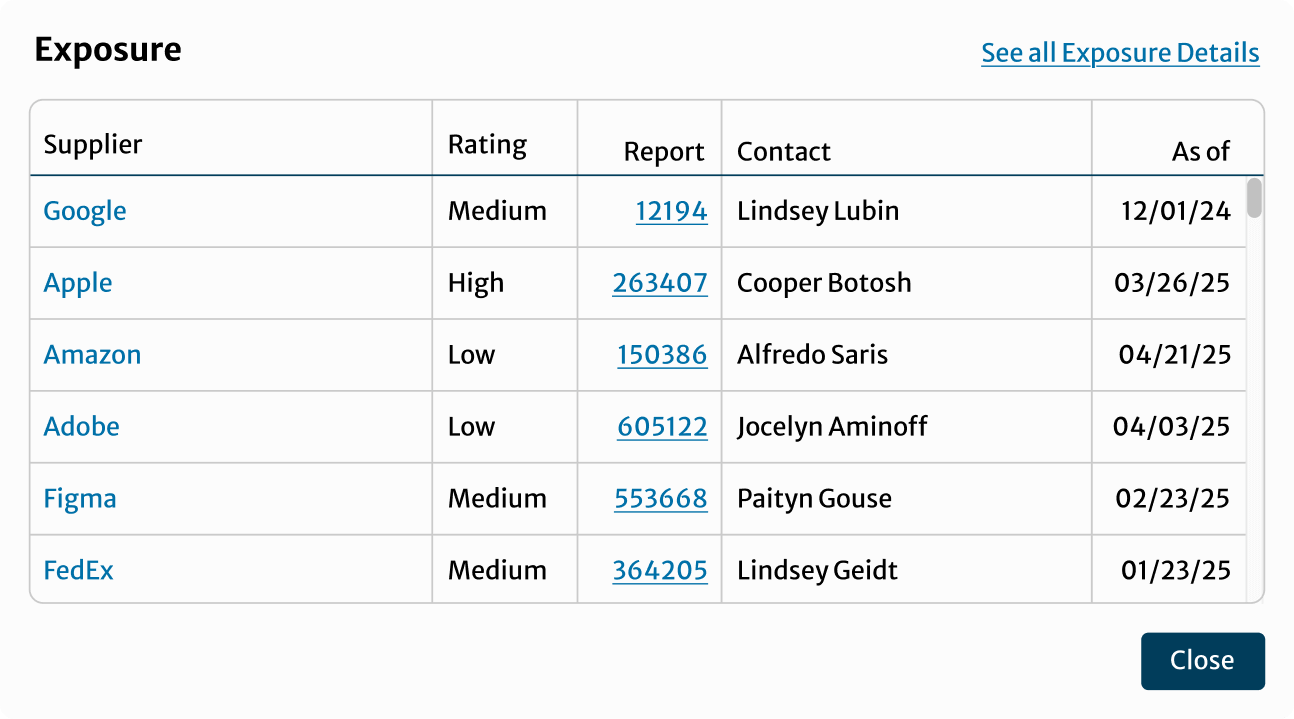

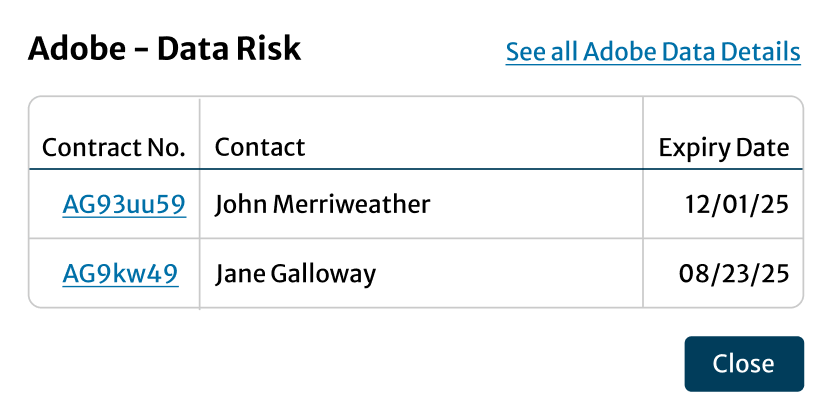

More Details Icons

Clicking an icon next to any summary graph opened a modal showing the suppliers behind that trend — connecting macro risk patterns to the specific vendors driving them.

4

Filters and Sorts

With hundreds of rows to sift through, users needed more control over what they were seeing. I added flexible filters and sortable columns so they could focus on what mattered most — without needing to export the data.

5

Escalated High-Risk Indicators

“There’s just so much data. If something’s really wrong, I need to see it fast.”

Because users were scanning quickly and juggling other tasks, we reserved red exclusively for critical risk. This visual priority helped the most urgent issues stand out, reducing the chance something serious would be missed.

Example modal opened from a summary graph, surfacing portfolio-level ratings for a specific risk metric.

Example modal opened from the megatable, showing supplier-specific risk details and linked case information.

Design Trade-offs

Several decisions shaped the final layout:

- Scorecards vs. Table

Scorecards were more readable but didn’t scale. The table handled comparison better in a high-volume setting. Without the table, teams would have kept to their inefficient workarounds.

- Modals vs. Separate Pages

Modals preserved context. When even more detail was needed, deep links in the modals opened existing platform pages in a new tab. Separate pages would have forced a new tab for every supplier, turning a 30-row scan into 30 back-button trips.

- Red Cell Alerts vs. Multi-Color Graphs

The graphs originally used color to signify risk levels, but I removed it so red table cells could stand out clearly as risk signals. If both the graphs and the table used color, real risk would blend in and busy users might miss critical suppliers.

Without this structure, users would’ve had to export the data and piece together risk signals manually — slowing them down and increasing the risk of human error.

Testing

Since we didn’t have time for formal usability testing, we treated the pilot as our real-world test bed. It let us see how people actually used the dashboard day-to-day.

After securing signoff from SMEs that the design met functional and compliance requirements, we launched to 30 pilot users across key teams.

After two weeks, we sent out a quick survey:

- Was anything unnecessary or unclear?

- Was anything missing?

- Was anything confusing?

We also opened a dedicated Slack channel for informal feedback. This helped capture nuance — including edge cases, real usage stories, and unexpected needs across roles.

All responses were added to a shared tracker, then reviewed with the product and engineering teams for feasibility and prioritization.

Excerpt from the pilot feedback tracker showing a sample of accepted, deferred, and future design suggestions.

Most feedback reinforced that the dashboard offered real value — but I still made three key refinements based on the user reactions.

Each fix was relatively lightweight but made the experience more resilient at scale — and validated that even informal, real-world testing could surface the changes that mattered.

Download Icons

Added export options to portfolio graphs so users could include them in decks without taking screenshots.

Secondary and Tertiary Sort

Introduced secondary and tertiary default sort logic to keep high-priority suppliers consistently near the top.

Clarifying Tooltips

Added hover definitions for risk terms unfamiliar to adjacent roles outside the core AE group.
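As a rough illustration of the secondary and tertiary default sort described above, the sketch below orders rows by risk level first, then by pending approvals, then by supplier name. The field names (`risk_level`, `pending_approvals`), the severity scale, and the choice of tie-breakers are all assumptions made for this example; the case study does not specify which columns the real dashboard used.

```python
from dataclasses import dataclass

# Hypothetical severity scale; lower number sorts first.
RISK_ORDER = {"critical": 0, "high": 1, "medium": 2, "low": 3}


@dataclass
class SupplierRow:
    name: str
    risk_level: str          # assumed field, one of the RISK_ORDER keys
    pending_approvals: int   # assumed field


def default_sort(rows):
    """Return rows in an illustrative default order.

    Primary key: risk level, most severe first.
    Secondary key: pending approvals, highest first.
    Tertiary key: supplier name, A to Z, so ties stay stable across reloads.
    """
    return sorted(
        rows,
        key=lambda r: (RISK_ORDER[r.risk_level], -r.pending_approvals, r.name.lower()),
    )


rows = [
    SupplierRow("Beta", "high", 2),
    SupplierRow("Acme", "critical", 1),
    SupplierRow("Delta", "high", 5),
    SupplierRow("Alpha", "critical", 1),
]
sorted_names = [r.name for r in default_sort(rows)]
```

With a single sort key, suppliers that share a risk level could appear in any order. The extra keys are what keep the highest-priority rows in a predictable position near the top.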

Outcomes

- +41% weekly active users in the first month.

- Pilot users report it “saves us hours every week” hunting data and chasing pending contracts.

- Adoption spread beyond Accountable Executives: Legal and Vendor-Risk teams now start their day in the dashboard.

“This dashboard makes so many tasks easier for so many roles.”

— VP from pilot group

Lessons

- Clarity beats complexity.

A dense back-end can still feel simple when risk and next steps surface first.

- Quick wins earn bigger budgets.

Export icons and tooltips built trust for deeper systemic fixes.

- Show the big picture and the next action in one place.

Portfolio-level trend graphs plus row-level risk flags let multiple roles act without switching views.


AI Today

This project wrapped only a few months before AI research tools became available at Capital One. At the time, clustering dozens of user quotes into themes was entirely manual — a process that often took hours of sorting and re-checking.

If I approached the same task today, I would use Miro AI to accelerate early synthesis. With one click, the same raw quotes are automatically grouped and labeled into draft themes (example below). While I’d still refine the clusters to ensure accuracy and relevance, AI provides a starting point in seconds instead of hours — letting me focus more quickly on framing the real problems and testing solutions.

Takeaway: AI doesn’t replace design judgment, but it changes the pace of early research. It turns what used to be a time sink into a quick draft, leaving more energy for the parts of design that require human insight.
